Patrick Amadeus Irawan

About Me

I am a PhD student at MBZUAI, advised by Alham Fikri Aji and Yova Kementchedjhieva. My work focuses on multimodal learning, post-training and alignment, grounded vision-language systems, and large-scale evaluation.

Previously, I was a Research Engineer at Singapore Management University, where I worked on multilingual and multimodal interpretation with Chong-Wah Ngo. Before that, I earned my B.Eng. in Computer Science from Institut Teknologi Bandung, where I worked with Ayu Purwarianti on synthetic data generation for explainable multimodal reasoning.

I further aim to build multimodal systems that reason effectively across diverse perceptual / action-based inputs without relying on modality-specific shortcuts.

Research Interests

I am interested in building multimodal systems that reasonably leverage their perceptual and semantic inputs to make grounded decisions and responses, rather than relying on shortcuts from a single modality.

- Modality Utilization.

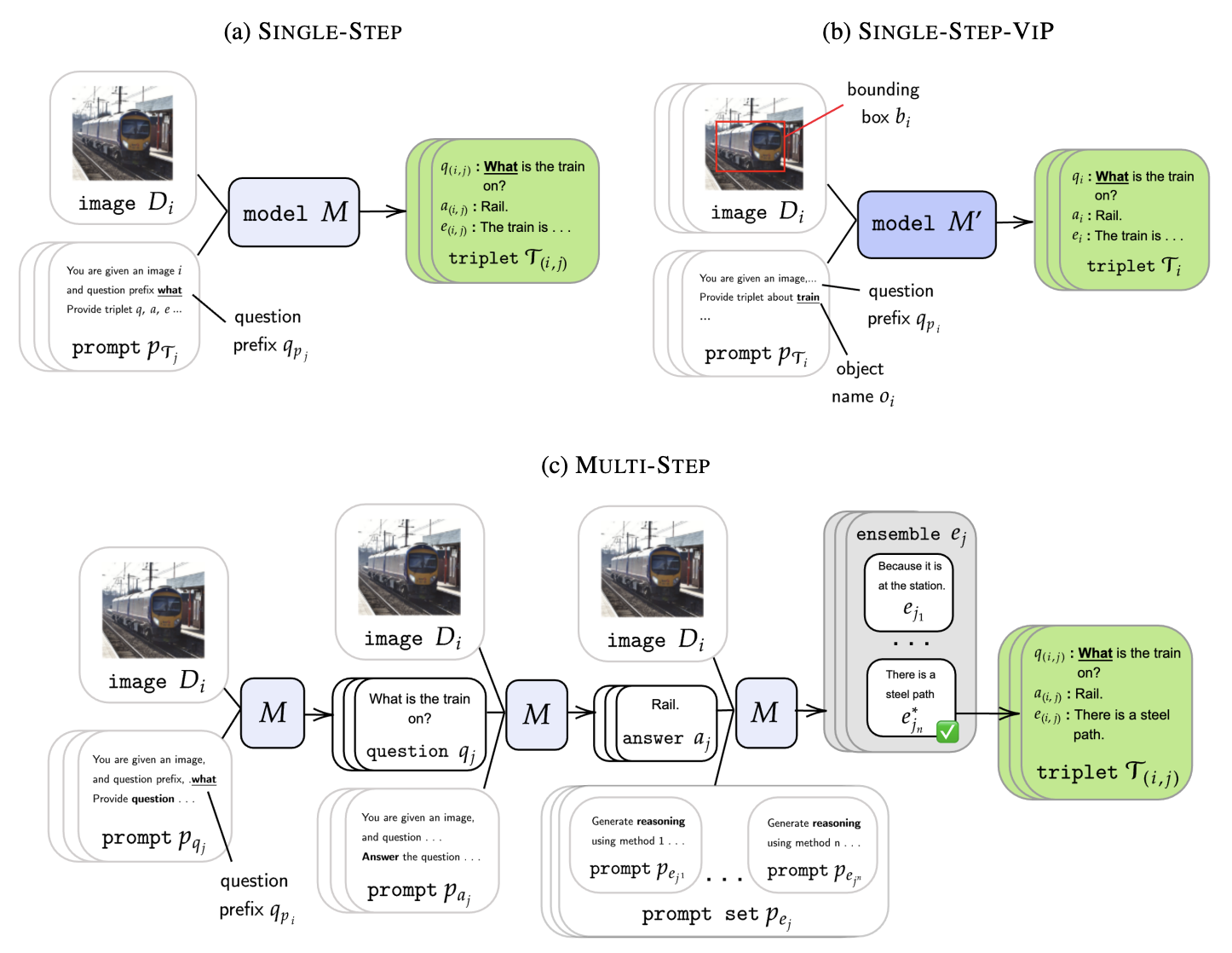

I study how multimodal models decide what to attend to, and why they often rely on dominant signals (e.g., language) instead of fully using other multimodal inputs. This leads to shortcut learning, hallucination, and weak grounding.

- Synthetic-VQA-NLE: synthetic explainable VQA generation pipeline; evaluates VQA reasoning of SOTA VLMs (at the time)

- SeeingCulture: shows how lack of domain awareness harms visual grounding and segmentation ability

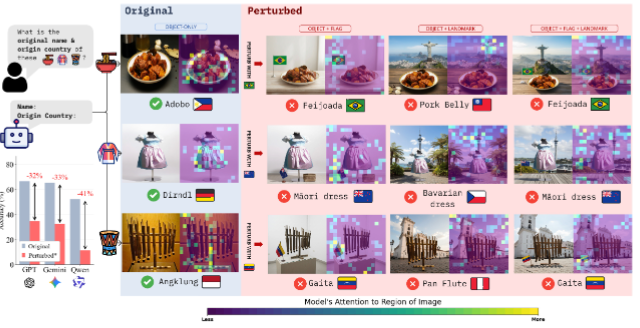

- ConfusedTourists: evaluates how semantically aligned contextual perturbations trigger biased visual grounding

- CountingTricks: exposes weaknesses in the straightforward perceptual counting ability of VLMs and shows how attention-balancing RL post-training may help mitigate them

- Post-Training & Cross-Modal Alignment.

To address these issues, I work on post-training methods that improve how models use and connect modalities, including distillation and dynamic supervision signals (action-conditioned signals, RL). The goal is to recover missing abilities and strengthen cross-modal alignment.

- LinguDistill: uses cross-modal distillation to recover degraded language abilities in VLMs

- Synthetic-VQA-NLE: also supports more grounded supervision signals

- Ongoing: world model evaluation for plan–action consistency, and memory-based VLMs to improve long-term grounding

- Large-Scale Evaluation.

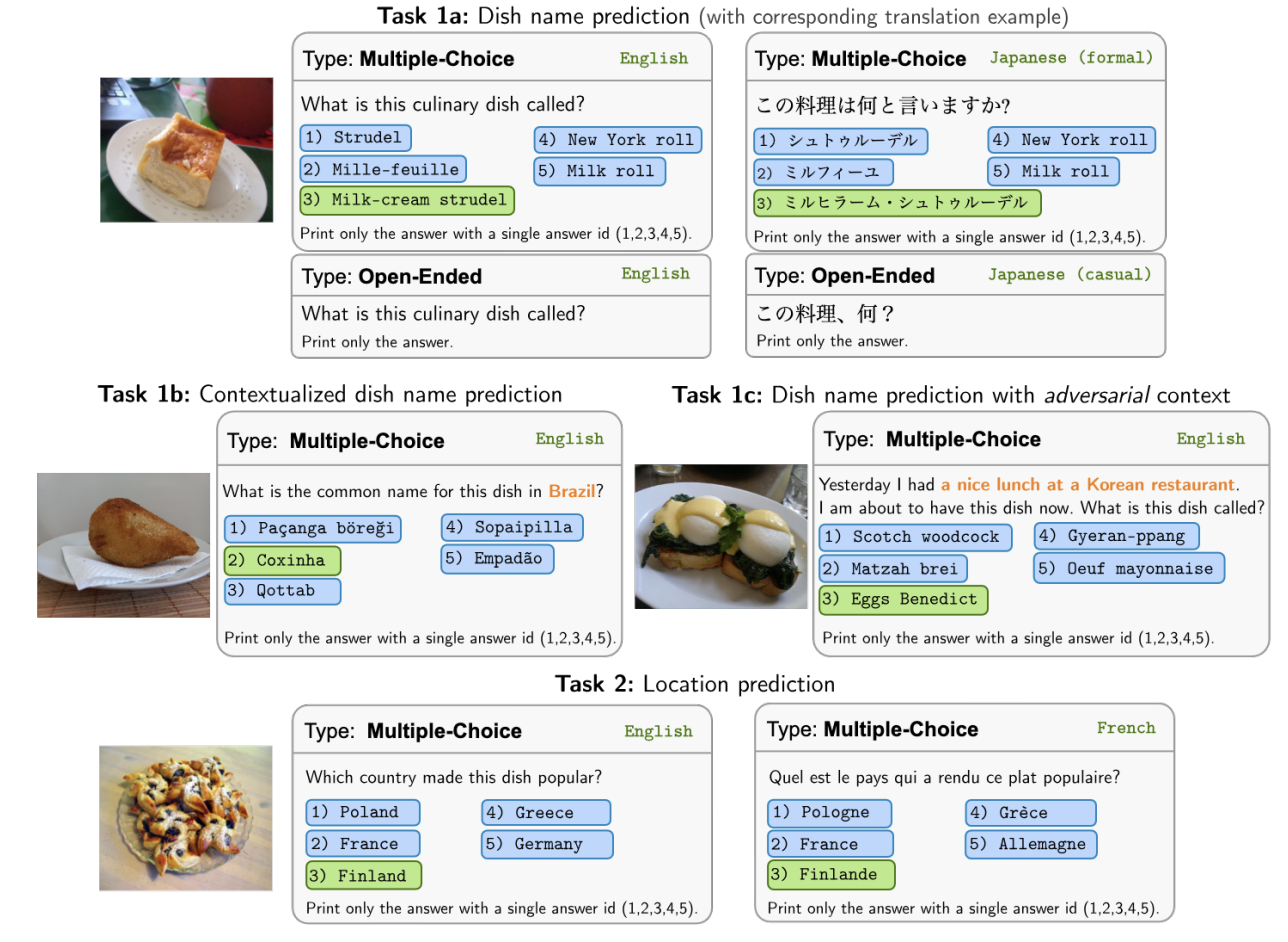

I am also keen on critically designing faithful large-scale evaluation setups to understand how models behave under distribution shifts, missing modalities, or limited resources, with a focus on reliability and robustness at scale.

- WorldCuisines: benchmarks domain-specific cultural and multilingual reasoning in VQA

- SEACrowd: builds a large-scale multimodal dataset hub for underrepresented languages across 4+ modality mixtures

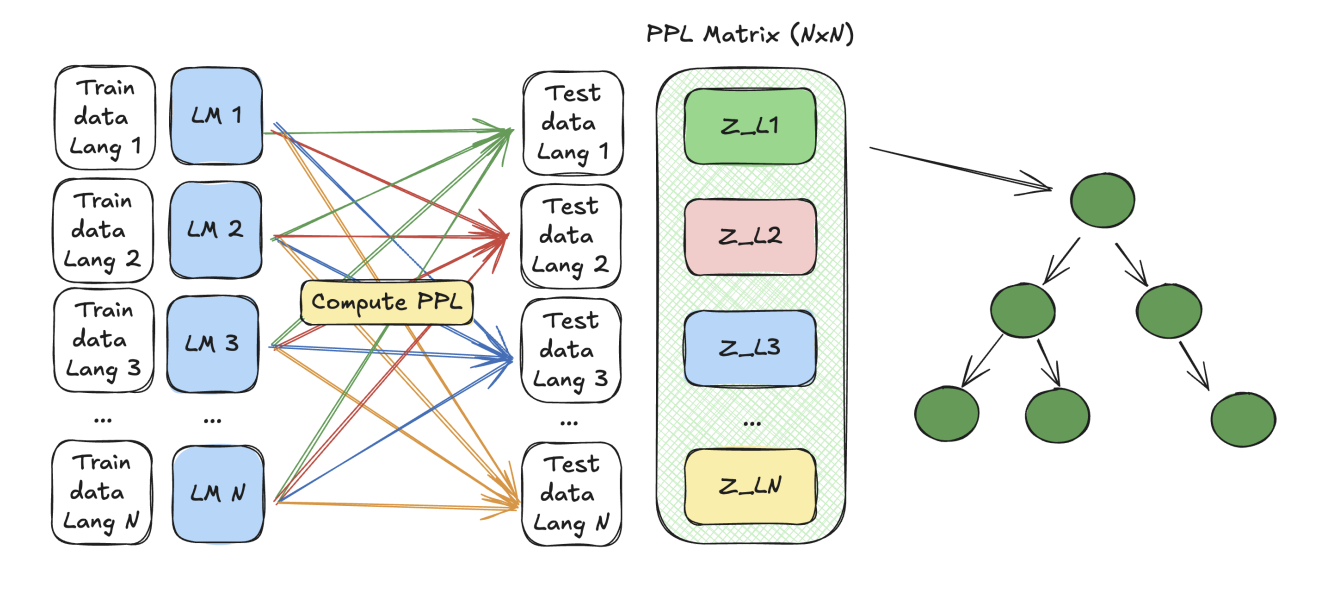

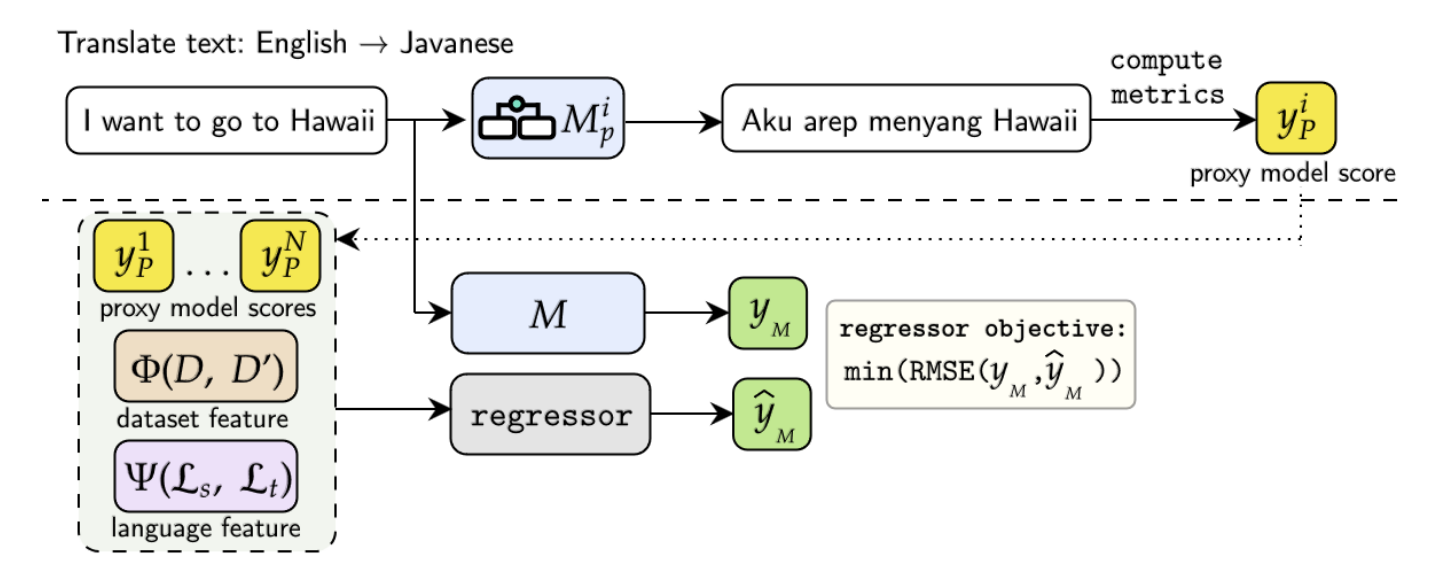

- ProxyLM: efficient multilingual performance prediction system leveraging data and language features

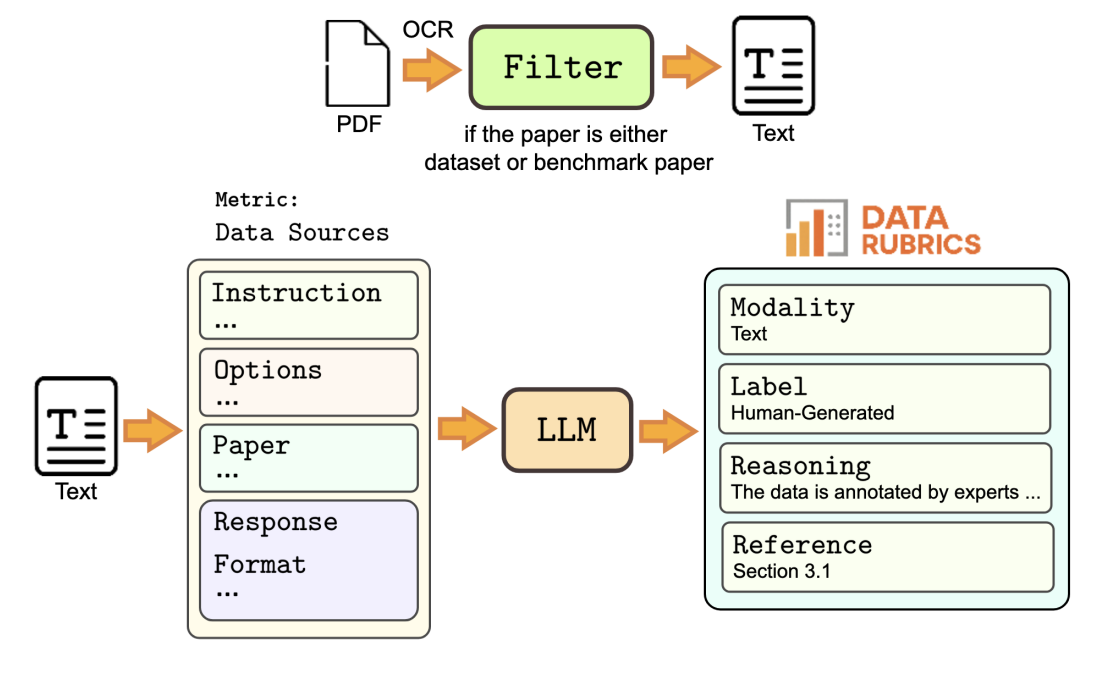

- DataRubrics: studies data quality and faithful benchmark creation aspects

Updates

- [Apr. 2026] LinguDistill is out on arXiv. We study how selective cross-modal distillation can recover linguistic ability in VLMs while preserving multimodal competence 🧠

- [Feb. 2026] 2 papers accepted to CVPR 2026! M4-RAG gets in as a main paper, and Vision Language Models are Confused Tourists appears in Findings 🎉

- [Nov. 2025] Our study exposing the confusion of VLMs in cultural-conflict visual scenarios, Vision Language Models are Confused Tourists, is up on arXiv 🧳

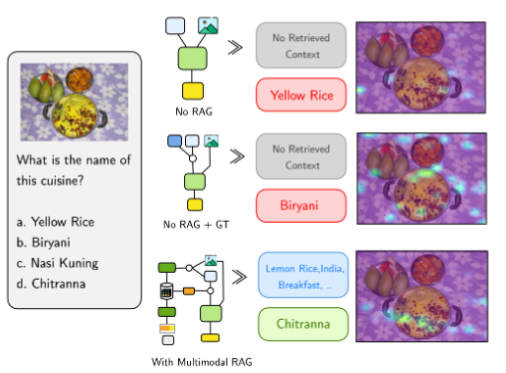

- [Dec. 2025] M4-RAG is out on arXiv! We present an evaluation of how multimodal knowledge enrichment helps models tackle multilingual queries. Spoiler: it does not always help… 🤯

- [Oct. 2025] Entropy2Vec got accepted into MRL Workshop @ EMNLP 2025 🌐🇨🇳

- [Jul. 2025] Seeing Culture Benchmark is accepted to EMNLP 2025 🇨🇳 On to the next one with the SMU Multimedia team 💪

- [May. 2025] DataRubrics is now on arXiv! We propose a unified scorecard to evaluate data quality on multi-faceted metrics 📊

- [Apr. 2025] WorldCuisines receives Best Theme Paper at NAACL 2025 🎉🌏🍽️

- [Mar. 2025] Admitted to the Fall 2025 cohort of the MBZUAI PhD program in NLP 📚

- [Jan. 2025] WorldCuisines and ProxyLM are accepted to NAACL 2025 🇺🇸🎖️

- [Nov. 2024] My first first-author paper, Towards Efficient and Robust VQA-NLE Data Generation with Large Vision-Language Models, is accepted to COLING 2025 🎉

- [Oct. 2024] WorldCuisines, the largest multicultural VL food benchmark, is released. Honored to co-lead the project 🥘

- [Sep. 2024] SEACrowd is accepted to EMNLP 2024 🇺🇸

Publications

2026

Preprint

Preprint, 2026. Proposes a selective distillation strategy to recover linguistic competence in VLMs without giving up multimodal capability.

CVPR 2026 Findings

Computer Vision and Pattern Recognition Conference (CVPR), 2026 Findings. Studies how VLMs misread culturally conflicting visual situations, exposing grounding failures that are invisible to standard benchmarks.

CVPR 2026

Computer Vision and Pattern Recognition Conference (CVPR), 2026. Evaluates whether multimodal retrieval actually helps multilingual and multicultural question answering at scale, and where it fails.

2025

EMNLP 2025

Conference on Empirical Methods in Natural Language Processing (EMNLP), 2025. Builds a benchmark for culture-sensitive visual reasoning and grounding, pushing evaluation beyond object recognition into contextual interpretation.

MRL @ EMNLP 2025

Entropy2Vec: Crosslingual Language Modeling Entropy as End-to-End Learnable Language Representations

Multilingual Representation Learning Workshop at EMNLP, 2025. Introduces entropy-based crosslingual representations that treat language modeling uncertainty as an end-to-end learnable signal. (PDF, Poster)

NAACL 2025

North American Chapter of the Association for Computational Linguistics (NAACL), 2025. Co-leads a benchmark that tests multilingual and multicultural VQA through food, culture, and visual context rather than English-centric priors.

NAACL 2025

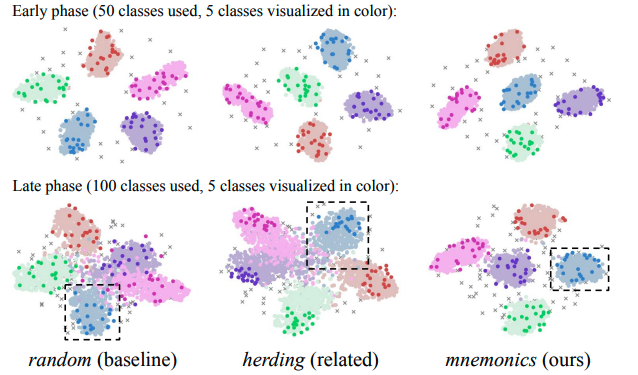

North American Chapter of the Association for Computational Linguistics (NAACL), 2025. Predicts multilingual model performance with cheaper proxy models, reducing evaluation cost when exploring large design spaces.

COLING 2025

International Conference on Computational Linguistics (COLING), 2025. Develops a more efficient pipeline for generating VQA explanations with VLMs, improving synthetic supervision for grounded reasoning.

Preprint

Preprint, 2025. Proposes an automated scorecard for dataset quality and accountability, making data auditing more systematic and comparable.

2024

EMNLP 2024

Conference on Empirical Methods in Natural Language Processing (EMNLP), 2024. Contributes to a multilingual multimodal data hub and benchmark suite centered on Southeast Asian languages, expanding evaluation beyond high-resource settings.

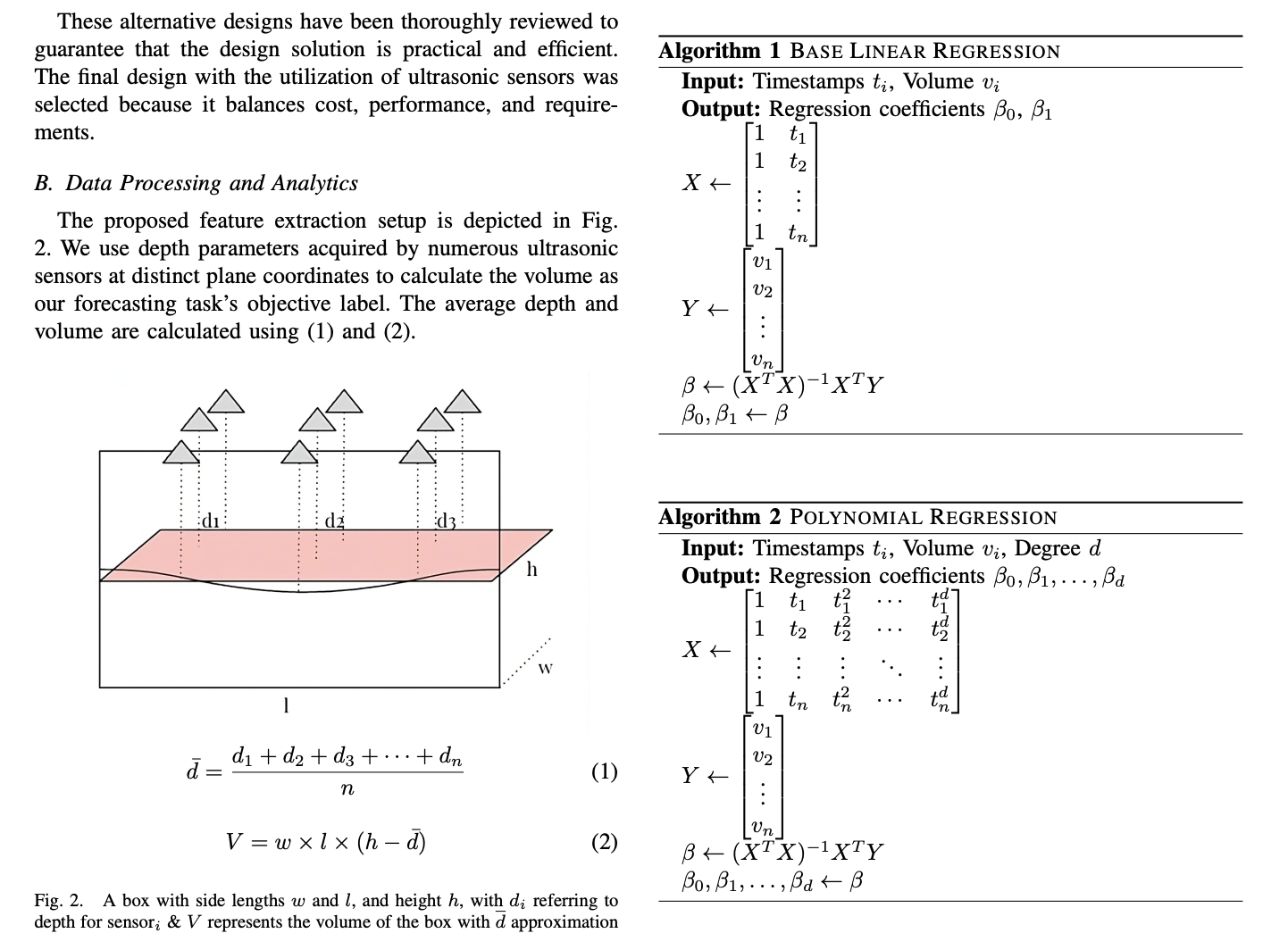

APSIPA ASC 2024

Asia Pacific Signal and Information Processing Association Annual Summit and Conference (APSIPA ASC), 2024. Applies machine learning and sensor systems to real-world stock monitoring, showing the engineering side of my research background. (PDF, Oral)

Experience & Service

Selected Experience

- Research Engineer @ Singapore Management University (2025 - 2025)

- Software Engineer @ IT Bauschmiede (2024 - 2025)

- Data Scientist Intern @ Supertype (2023 - 2023)

- Software Engineer Intern @ Blibli (2022 - 2022)

- Software Engineer Intern @ Ruangguru (2022 - 2022)